Quick Answer (TL;DR)

This free PowerPoint template plans AI/ML operations infrastructure across five domains: Model Serving, Monitoring & Observability, Retraining Pipelines, Cost Management, and Experimentation. Each domain has initiative cards tracking infrastructure maturity, cost impact, and delivery milestones. Download the .pptx, assess your current MLOps maturity against each domain, and build a phased plan to move from manual model deployments to automated, observable, cost-efficient AI operations.

What This Template Includes

- Cover slide. Product name, ML platform team, number of production models, and current monthly AI inference spend.

- Instructions slide. How to assess MLOps maturity, prioritize infrastructure investments, and track cost efficiency gains. Remove before presenting.

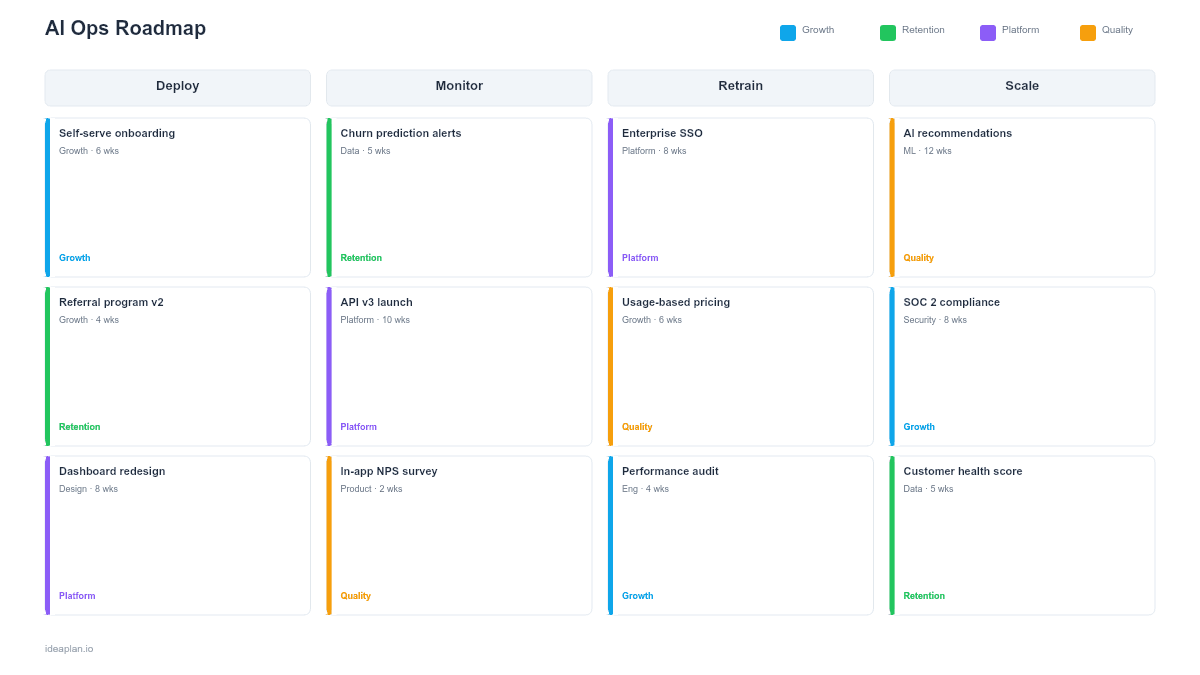

- Blank AI ops roadmap slide. Five domain rows (Model Serving, Monitoring, Retraining, Cost Management, Experimentation) with initiative cards on a quarterly timeline and a maturity level indicator (Manual, Automated, Optimized) for each domain.

- Filled example slide. A growth-stage SaaS company's AI ops roadmap showing GPU serving optimization, drift detection deployment, weekly retraining pipeline for the recommendation engine, per-model cost attribution dashboards, and shadow mode A/B testing framework.

Why AI Ops Deserves a Dedicated Roadmap

Shipping a model to production is 20% of the ML work. The remaining 80% is operations: serving it reliably, monitoring for degradation, retraining when performance drops, managing inference costs that scale with usage, and running experiments to validate improvements. Most teams treat operations as an afterthought and pay for it with production incidents, runaway costs, and models that silently degrade.

AI ops infrastructure compounds. A monitoring system that catches model drift early prevents user-facing quality drops. A retraining pipeline that runs automatically eliminates the manual toil that causes teams to skip retraining cycles. Cost attribution at the model level reveals which AI features are worth their inference spend and which should be simplified or removed.

The AI product lifecycle framework covers the end-to-end model journey. This template focuses specifically on the operational infrastructure that keeps production AI systems healthy and cost-efficient.

Template Structure

Five Operations Domains

Rows represent the core operational capabilities:

- Model Serving. Inference infrastructure, latency optimization, GPU/CPU allocation, autoscaling, model versioning, canary deployments, and fallback routing. This domain answers: can the model serve predictions reliably at production scale?

- Monitoring & Observability. Performance dashboards, drift detection, alert thresholds for eval pass rate degradation, logging pipelines, and audit trails. This domain answers: do we know when a model starts failing?

- Retraining Pipelines. Automated data collection for retraining, training job orchestration, evaluation gates before promotion, and rollback procedures. This domain answers: can we update models without manual heroics?

- Cost Management. Per-model cost attribution, token cost per interaction tracking, spend alerts, right-sizing inference hardware, and cost-performance tradeoff analysis. This domain answers: do we know what AI costs and whether it is worth it?

- Experimentation. A/B testing framework for model variants, shadow mode deployment (new model runs alongside production without affecting users), offline evaluation pipelines, and experiment tracking. This domain answers: can we measure whether a new model is actually better?

Initiative Cards

Each card contains:

- Initiative name. Specific infrastructure investment (e.g., "Deploy drift detection for recommendation model").

- Domain maturity target. Which maturity level this initiative moves the domain toward (Manual, Automated, or Optimized).

- Models affected. Which production models benefit from this infrastructure.

- Cost impact. Expected cost savings or cost to implement, making the business case visible.

- Owner and timeline. Platform team member and target quarter.

Maturity Level Indicators

Each domain row has a current maturity marker: Manual (ad-hoc processes, no automation), Automated (pipelines exist and run without intervention), or Optimized (automated with cost efficiency and performance tuning). The roadmap shows where each domain is today and where it will be by quarter end.

How to Use This Template

1. Inventory production models and their operational state

List every model in production with its current serving setup, monitoring coverage, retraining frequency, monthly cost, and last experiment date. Most teams discover models running in production with zero monitoring and manual retraining schedules that slipped months ago.

2. Assess maturity per domain

For each of the five domains, rate your current maturity as Manual, Automated, or Optimized. Be honest. A monitoring dashboard that nobody checks is effectively Manual. A retraining pipeline that requires an engineer to trigger it is Manual with automation potential, not Automated.

3. Prioritize by pain and model count

Invest first in the domain causing the most production pain for the most models. If three models have degraded without anyone noticing, Monitoring is the priority. If inference costs doubled last quarter with no corresponding value increase, Cost Management comes first. The AI cost per output metric helps quantify the cost management opportunity.

4. Build shared infrastructure before model-specific tooling

A drift detection system that works for all models is more valuable than a custom monitoring solution for one model. Prioritize platform-level investments that serve the entire model portfolio over point solutions for individual models.

5. Review monthly with the ML platform team

Use this roadmap in monthly platform team reviews to track infrastructure maturity progression, cost trends, and upcoming model launches that will need operational support. Align with the product team on which new AI features are coming so infrastructure is ready before models hit production.

When to Use This Template

An AI ops roadmap is the right format when:

- Multiple models are in production and operational maturity varies widely across them

- Inference costs are growing faster than the value AI features deliver

- Model quality incidents (degradation, drift, outages) occur because monitoring gaps allow problems to go undetected

- Manual toil for model deployments, retraining, and experiments is consuming ML engineering capacity

- New AI features are planned and the platform needs to be ready to support them at launch

If you are building your first AI feature and do not yet have production models, the AI feature roadmap template is more appropriate. For the broader ML project lifecycle including data readiness and experimentation, the machine learning roadmap template covers the full picture.

Featured in

This template is featured in AI and Machine Learning Roadmap Templates, a curated collection of roadmap templates for this use case.

Key Takeaways

- AI ops spans five domains: Model Serving, Monitoring, Retraining, Cost Management, and Experimentation.

- Assess maturity per domain (Manual, Automated, Optimized) to identify the highest-value infrastructure investments.

- Shared platform infrastructure that serves all models is more valuable than model-specific tooling.

- Cost attribution at the model level is essential for understanding whether AI features justify their inference spend.

- Monthly reviews tracking maturity progression prevent AI operations from becoming an afterthought.

- Compatible with Google Slides, Keynote, and LibreOffice Impress. Upload the

.pptxto Google Drive to edit collaboratively in your browser.