Quick Answer (TL;DR)

This free PowerPoint template maps AI governance initiatives across four pillars: Policy & Standards, Review Processes, Model Registry, and Compliance Monitoring. Each initiative card tracks the responsible owner, affected AI systems, regulatory requirements, and implementation status. Download the .pptx, fill in your organization's AI systems and regulatory obligations, and use it to show leadership that AI governance follows a structured rollout rather than a reactive scramble after something goes wrong.

What This Template Includes

- Cover slide. Organization name, AI governance program scope, and the governance lead or chief AI officer responsible for the program.

- Instructions slide. How to map AI systems to governance requirements, assign review cadences, and track policy adoption. Remove before presenting.

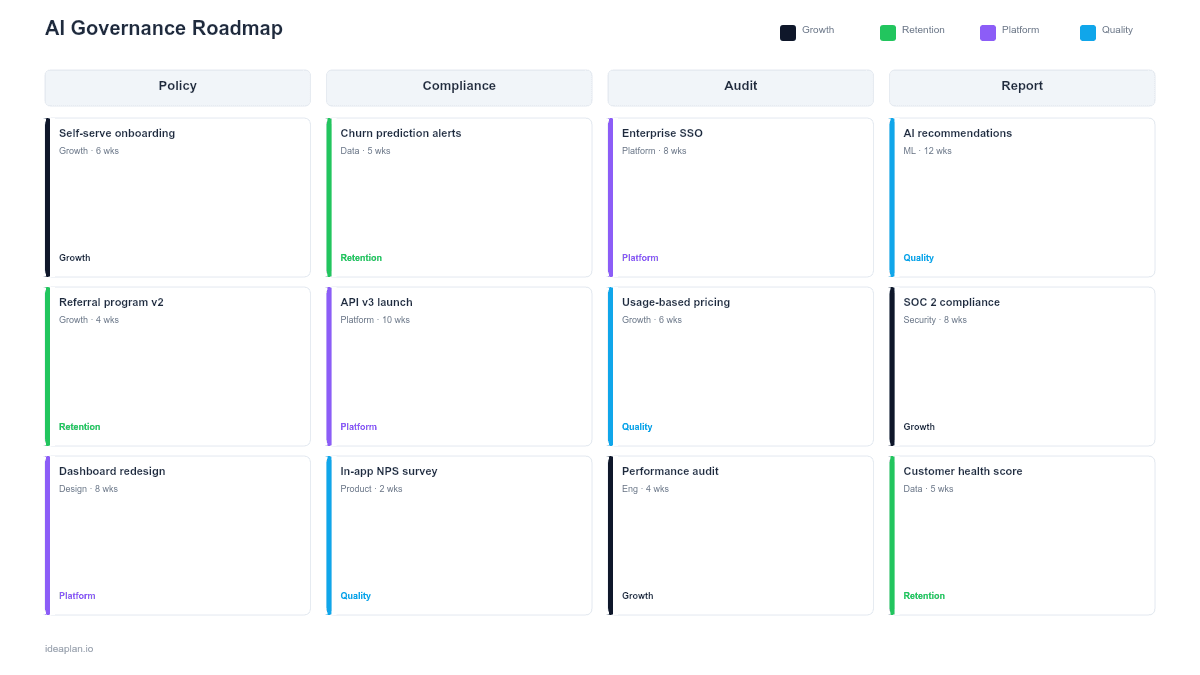

- Blank governance roadmap slide. Four pillars (Policy & Standards, Review Processes, Model Registry, Compliance Monitoring) with initiative cards, regulatory requirement tags, and a quarterly timeline overlay.

- Filled example slide. A mid-size SaaS company's governance roadmap showing acceptable use policy rollout, AI review board formation, model registry implementation, and automated bias monitoring across two quarters.

Why AI Governance Needs Its Own Roadmap

Many product teams bolt governance onto existing compliance programs as an afterthought. That approach breaks down once AI systems scale past a handful of models. A recommendation engine trained on user behavior has different governance needs than a customer support chatbot generating free-text responses. A model accuracy score might satisfy quality requirements but say nothing about fairness or privacy.

AI governance requires coordinating legal, engineering, product, and data science teams on policies that do not yet have established industry standards. The EU AI Act sets obligations that scale with a system's risk level, the NIST AI Risk Management Framework offers voluntary guidance, and internal corporate policies add their own requirements on top. Without a dedicated roadmap, governance work gets deprioritized in favor of feature development until a regulatory inquiry or public incident forces action.

The responsible AI framework provides the underlying principles. This template turns those principles into a sequenced implementation plan.

Template Structure

Four Governance Pillars

Columns represent the governance domains:

- Policy & Standards. Acceptable use policies, model development standards, data usage guidelines, and documentation requirements. These set the rules that other pillars enforce.

- Review Processes. AI review board formation, pre-deployment review checklists, periodic audit schedules, and incident response procedures. These ensure AI systems are evaluated before and after launch.

- Model Registry. A centralized inventory of all AI models with metadata: training data sources, performance metrics, risk classification, and ownership. Without a registry, organizations cannot answer basic questions about what AI they are running.

- Compliance Monitoring. Automated monitoring for bias, drift, and regulatory compliance. Dashboards track fairness metrics across production systems and, for generative systems, hallucination rate.
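As a rough illustration of what the Model Registry pillar stores, here is a minimal Python sketch of a registry holding the metadata listed above (training data sources, performance metrics, risk classification, ownership). The class names, field names, and the `third_party` flag are hypothetical; the template itself does not prescribe any data model.

```python
from dataclasses import dataclass, field


@dataclass
class RegistryEntry:
    """One AI system in a hypothetical centralized inventory."""
    name: str
    owner: str                      # team or person accountable for the model
    risk_level: str                 # "minimal", "limited", "high", or "unacceptable"
    training_data_sources: list[str] = field(default_factory=list)
    performance_metrics: dict[str, float] = field(default_factory=dict)
    third_party: bool = False       # vendor APIs and embedded models are registered too


class ModelRegistry:
    """Answers basic questions such as: which high-risk systems are we running?"""

    def __init__(self) -> None:
        self._entries: dict[str, RegistryEntry] = {}

    def register(self, entry: RegistryEntry) -> None:
        # Reject duplicates so each system has exactly one record.
        if entry.name in self._entries:
            raise ValueError(f"{entry.name} is already registered")
        self._entries[entry.name] = entry

    def by_risk(self, risk_level: str) -> list[str]:
        return sorted(e.name for e in self._entries.values() if e.risk_level == risk_level)

    def third_party_systems(self) -> list[str]:
        return sorted(e.name for e in self._entries.values() if e.third_party)

    def unowned(self) -> list[str]:
        # Entries with a blank owner are governance gaps.
        return sorted(e.name for e in self._entries.values() if not e.owner.strip())


registry = ModelRegistry()
registry.register(RegistryEntry("resume-screener", "Talent Engineering", "high",
                                training_data_sources=["historical applications"]))
registry.register(RegistryEntry("support-chatbot", "", "limited", third_party=True))
print(registry.by_risk("high"))     # ['resume-screener']
print(registry.unowned())           # ['support-chatbot']
```

Even this small structure answers the "what AI are we running?" question and surfaces entries with no accountable owner.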

Initiative Cards

Each card contains:

- Initiative name. The specific governance action (e.g., "Establish AI review board" or "Deploy bias monitoring for recommendation engine").

- Owner. The team or individual responsible for delivery.

- Affected systems. Which AI models or features this initiative covers.

- Regulatory mapping. Which regulations or frameworks require or recommend this (EU AI Act, NIST AI RMF, SOC 2 controls applied to AI systems).

- Status. Not started, in progress, implemented, or under review.
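If you also track initiatives outside the slide, for example in a spreadsheet export or a script, each card maps naturally onto a small record. The following Python sketch shows one assumed shape: the `Pillar` and `Status` enums mirror the four pillars and four status values named in this template, while the owner and system names are made up for illustration.

```python
from dataclasses import dataclass, field
from enum import Enum


class Pillar(Enum):
    POLICY = "Policy & Standards"
    REVIEW = "Review Processes"
    REGISTRY = "Model Registry"
    MONITORING = "Compliance Monitoring"


class Status(Enum):
    NOT_STARTED = "not started"
    IN_PROGRESS = "in progress"
    IMPLEMENTED = "implemented"
    UNDER_REVIEW = "under review"


@dataclass
class InitiativeCard:
    name: str
    pillar: Pillar
    owner: str
    affected_systems: list[str] = field(default_factory=list)
    regulatory_mapping: list[str] = field(default_factory=list)
    status: Status = Status.NOT_STARTED

    def label(self) -> str:
        """Render the card as one line, roughly matching the slide fields."""
        systems = ", ".join(self.affected_systems) or "no systems listed"
        regs = ", ".join(self.regulatory_mapping) or "unmapped"
        return (f"{self.name} [{self.pillar.value}] | owner: {self.owner} | "
                f"systems: {systems} | regs: {regs} | {self.status.value}")


card = InitiativeCard(
    name="Deploy bias monitoring for recommendation engine",
    pillar=Pillar.MONITORING,
    owner="ML Platform",
    affected_systems=["recommendation-engine"],
    regulatory_mapping=["NIST AI RMF"],
    status=Status.IN_PROGRESS,
)
print(card.label())
```

Cards default to "not started" and show "unmapped" when no regulation is attached, which makes gaps easy to spot in a review.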

Risk Classification Band

A top band classifies each AI system by risk level (minimal, limited, high, unacceptable), aligned with the EU AI Act's risk categories. High-risk systems need all four governance pillars active before deployment. Minimal-risk systems may only require registry entry and basic monitoring. Unacceptable-risk systems fall under the EU AI Act's prohibited practices and should not be deployed at all.
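The band's rule can be expressed as a pre-deployment check. This sketch encodes the two cases the text spells out (high-risk needs all four pillars; minimal-risk needs registry entry and monitoring). The requirement set for limited-risk systems is an assumption, and unacceptable-risk systems are rejected outright because the EU AI Act prohibits those practices.

```python
from enum import Enum


class Pillar(Enum):
    POLICY = "Policy & Standards"
    REVIEW = "Review Processes"
    REGISTRY = "Model Registry"
    MONITORING = "Compliance Monitoring"


# Pillars that must be active before deployment, per risk band.
# "high" and "minimal" follow the template text; "limited" is an assumption.
REQUIRED_PILLARS: dict[str, frozenset[Pillar]] = {
    "minimal": frozenset({Pillar.REGISTRY, Pillar.MONITORING}),
    "limited": frozenset({Pillar.POLICY, Pillar.REGISTRY, Pillar.MONITORING}),
    "high": frozenset(Pillar),  # every pillar
}


def missing_pillars(risk_level: str, active: set[Pillar]) -> set[Pillar]:
    """Return the pillars still needed before this system may deploy."""
    if risk_level == "unacceptable":
        # Unacceptable-risk practices are prohibited under the EU AI Act.
        raise ValueError("unacceptable-risk systems should not be deployed")
    return set(REQUIRED_PILLARS[risk_level] - active)


def may_deploy(risk_level: str, active: set[Pillar]) -> bool:
    return not missing_pillars(risk_level, active)


active = {Pillar.REGISTRY, Pillar.MONITORING}
print(may_deploy("minimal", active))                              # True
print(sorted(p.value for p in missing_pillars("high", active)))   # ['Policy & Standards', 'Review Processes']
```

Returning the missing pillars, rather than only a yes/no, gives the governance board a concrete to-do list for each blocked system.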

How to Use This Template

1. Inventory all AI systems

List every model, algorithm, and AI-powered feature in production or development. Include third-party AI services (APIs, embedded models) since governance obligations apply regardless of whether you built the model. Most organizations undercount their AI surface area by 30-50% when they first audit.

2. Classify risk levels

Assign each AI system a risk level based on the decisions it influences, the data it processes, and the consequences of failure. The AI risk assessment framework provides structured criteria for this classification. Systems that affect hiring, credit, healthcare, or safety decisions should be treated as high-risk by default.
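A first-pass classifier can apply the default rule automatically and leave edge cases to reviewers. In this hypothetical sketch only the hiring/credit/healthcare/safety rule comes from the text. The escalation for severe failure impact and the `limited` fallback are placeholder assumptions, and the function never returns `unacceptable`, since that determination belongs to legal review.

```python
from dataclasses import dataclass

# Decision domains the template treats as high-risk by default.
HIGH_RISK_DOMAINS = frozenset({"hiring", "credit", "healthcare", "safety"})


@dataclass
class SystemProfile:
    name: str
    decision_domains: set[str]           # decisions the system influences
    processes_personal_data: bool        # the data it processes
    severe_failure_impact: bool          # consequences of failure, as judged by the owner
    interacts_with_people: bool = False  # e.g. a chatbot talking to customers


def classify(profile: SystemProfile) -> str:
    """Hypothetical first-pass risk level; a reviewer confirms or overrides it."""
    if profile.decision_domains & HIGH_RISK_DOMAINS:
        return "high"      # rule from the template text
    if profile.severe_failure_impact:
        return "high"      # assumption: escalate severe-impact systems
    if profile.processes_personal_data or profile.interacts_with_people:
        return "limited"   # assumption: placeholder middle band
    return "minimal"


screener = SystemProfile("resume-screener", {"hiring"}, True, False)
print(classify(screener))  # high
```

Treating the output as a starting point keeps humans accountable for the final classification while making sure no hiring, credit, healthcare, or safety system slips through as low-risk.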

3. Map regulatory requirements

For each AI system, identify which regulations apply. A system processing personal data of people in the EU needs GDPR compliance. A system making consequential decisions may fall under EU AI Act obligations. Map each requirement to the governance pillar responsible for addressing it.
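The mapping step can be sketched as two small lookups: one that flags candidate regulations from facts about a system, and one that routes each requirement to a pillar. Only the GDPR and EU AI Act triggers come from the text above. The requirement names and their pillar assignments are hypothetical examples, and the output is a list to confirm with legal counsel, not a legal determination.

```python
from dataclasses import dataclass


@dataclass
class SystemFacts:
    name: str
    processes_eu_personal_data: bool
    makes_consequential_decisions: bool


def applicable_regulations(facts: SystemFacts) -> list[str]:
    """Flag regulations to confirm with legal; a screening aid, not a legal test."""
    regs = []
    if facts.processes_eu_personal_data:
        regs.append("GDPR")
    if facts.makes_consequential_decisions:
        regs.append("EU AI Act (to confirm)")
    return regs


# Hypothetical requirement -> pillar routing; replace with your own obligations.
REQUIREMENT_TO_PILLAR = {
    "document data usage": "Policy & Standards",
    "pre-deployment review": "Review Processes",
    "maintain system inventory": "Model Registry",
    "monitor for bias and drift": "Compliance Monitoring",
}


def route(requirements: list[str]) -> dict[str, list[str]]:
    """Group requirements under the pillar responsible for them."""
    by_pillar: dict[str, list[str]] = {}
    for req in requirements:
        pillar = REQUIREMENT_TO_PILLAR.get(req, "Unassigned")
        by_pillar.setdefault(pillar, []).append(req)
    return by_pillar


print(applicable_regulations(SystemFacts("resume-screener", True, True)))
print(route(["pre-deployment review", "monitor for bias and drift"]))
```

Requirements that match no pillar land under "Unassigned", which is itself a useful signal that the roadmap has a gap.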

4. Sequence by risk and regulatory deadlines

High-risk systems get governance infrastructure first. If an EU AI Act compliance deadline is approaching, the model registry and review processes for affected systems take priority. Lower-risk systems follow once the governance foundation is in place.
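One way to turn this ordering into a sortable backlog is to sort by risk band first and by nearest regulatory deadline second. This is an assumed policy, not the only reasonable one: a team facing an imminent deadline for a lower-risk system might weight the deadline above the band. The systems and dates in the example are hypothetical.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional

# Lower rank is scheduled sooner. Unacceptable-risk systems are left out
# because they should not be deployed at all.
RISK_RANK = {"high": 0, "limited": 1, "minimal": 2}


@dataclass
class PlannedSystem:
    name: str
    risk_level: str
    deadline: Optional[date] = None  # nearest regulatory deadline, if any


def sequence(systems: list[PlannedSystem]) -> list[str]:
    """Order systems by risk band, then by nearest regulatory deadline."""
    def sort_key(s: PlannedSystem) -> tuple[int, date]:
        # Within a band, systems without a deadline go after those with one.
        return (RISK_RANK[s.risk_level], s.deadline or date.max)

    return [s.name for s in sorted(systems, key=sort_key)]


# Hypothetical systems and dates, for illustration only.
plan = [
    PlannedSystem("spam-filter", "minimal"),
    PlannedSystem("resume-screener", "high", date(2031, 6, 30)),
    PlannedSystem("support-chatbot", "limited"),
    PlannedSystem("credit-scorer", "high", date(2031, 1, 15)),
]
print(sequence(plan))
# ['credit-scorer', 'resume-screener', 'support-chatbot', 'spam-filter']
```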

5. Review quarterly with the governance board

Use this slide as the standing agenda item for AI governance reviews. Track which policies are adopted, which systems have completed review, and which monitoring gaps remain. The stakeholder management guide helps structure these cross-functional reviews.
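If card statuses are exported from the slide into a list, the three review questions (policies adopted, systems reviewed, monitoring gaps) reduce to a few set operations. This Python sketch is hypothetical: it assumes an implemented Review Processes card marks its systems as reviewed and an implemented Compliance Monitoring card marks them as monitored.

```python
from collections import Counter
from dataclasses import dataclass

POLICY = "Policy & Standards"
REVIEW = "Review Processes"
MONITORING = "Compliance Monitoring"


@dataclass
class CardSnapshot:
    name: str
    pillar: str
    status: str  # "not started", "in progress", "implemented", or "under review"
    affected_systems: tuple[str, ...] = ()


def _covered(cards: list[CardSnapshot], pillar: str) -> set[str]:
    """Systems covered by implemented cards in the given pillar."""
    return {
        system
        for card in cards
        if card.pillar == pillar and card.status == "implemented"
        for system in card.affected_systems
    }


def quarterly_summary(cards: list[CardSnapshot], production: set[str]) -> dict[str, object]:
    return {
        "status_counts": dict(Counter(c.status for c in cards)),
        "adopted_policies": sorted(
            c.name for c in cards if c.pillar == POLICY and c.status == "implemented"
        ),
        "unreviewed_systems": sorted(production - _covered(cards, REVIEW)),
        "monitoring_gaps": sorted(production - _covered(cards, MONITORING)),
    }


cards = [
    CardSnapshot("Acceptable use policy", POLICY, "implemented"),
    CardSnapshot("Review: resume-screener", REVIEW, "implemented", ("resume-screener",)),
    CardSnapshot("Bias monitoring: resume-screener", MONITORING, "in progress", ("resume-screener",)),
]
summary = quarterly_summary(cards, {"resume-screener", "support-chatbot"})
print(summary["unreviewed_systems"])  # ['support-chatbot']
print(summary["monitoring_gaps"])     # ['resume-screener', 'support-chatbot']
```

Only implemented work counts as coverage here, so an in-progress monitoring card still shows up as a gap until it ships.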

When to Use This Template

An AI governance roadmap is the right format when:

- Multiple AI systems are in production and no centralized inventory or review process exists

- Regulatory pressure from the EU AI Act, industry-specific rules, or customer audits requires documented governance

- Leadership needs visibility into AI risk exposure across the organization

- Cross-functional coordination between legal, engineering, data science, and product teams requires a shared plan

- Third-party AI vendors are embedded in the product and governance obligations extend to their models

If you have a single AI feature with clear, limited scope, the AI feature roadmap template is more appropriate. For compliance-specific timelines without the broader governance structure, the regulatory compliance roadmap focuses on audit milestones.

Featured in

This template is featured in AI and Machine Learning Roadmap Templates, a curated collection of roadmap templates for this use case.

Key Takeaways

- AI governance spans four pillars: Policy & Standards, Review Processes, Model Registry, and Compliance Monitoring.

- Every AI system needs a risk classification that determines the depth of governance required.

- Third-party AI services carry the same governance obligations as internally built models.

- Sequence governance rollout by risk level and regulatory deadlines, starting with high-risk systems.

- Quarterly governance board reviews using this roadmap keep cross-functional teams aligned and accountable.

- Compatible with Google Slides, Keynote, and LibreOffice Impress. Upload the .pptx to Google Drive to edit collaboratively in your browser.